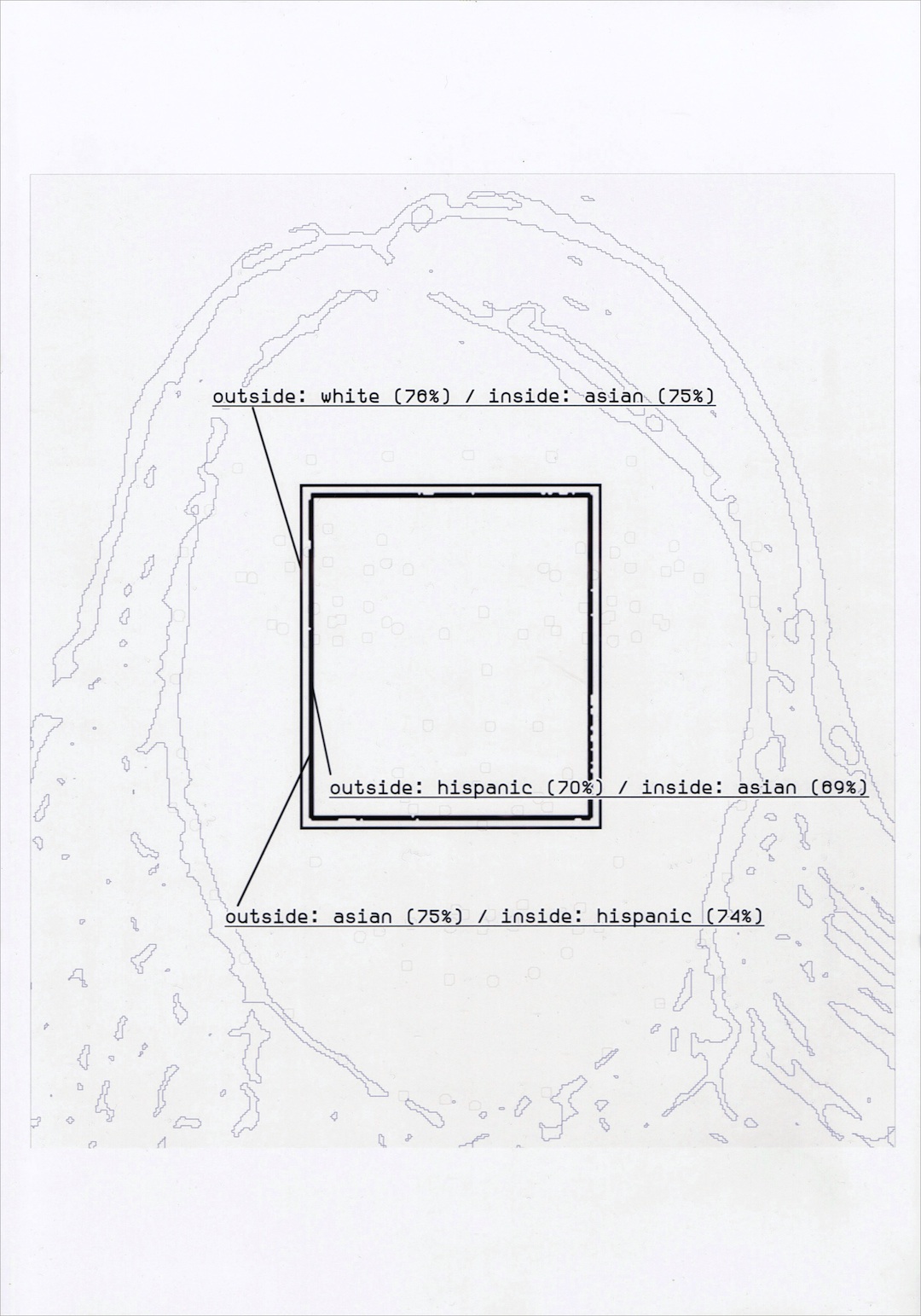

Thresholds

(2018/work-in-progress)

How can we explore the role of human bias in AI systems through the function of categorisation, and draw on its limits to strategically confuse AIs? Using manipulated images of the artist’s face, we explore the limits and thresholds of the categories used in a facial recognition algorithm. Having submitted a composite face that the algorithm identifies as both ‘Asian’ and ‘White’, we have worked on narrowing down the edges of these categories (where ‘Asian’ suddenly becomes ‘White’) and the points at which unexpected categories seem to emerge (with the artist’s face being identified as ‘Hispanic’). The artwork makes visible the infinitesimal point of difference seen by the machine and built by those who construct its categories.

Developed as part of the residency at Space Art + Tech in collaboration with Murad Khan.

Interview on the project at Space

Workshop for Art + Tech Residency

I’d Rather Not Be Face Tracked

(2018), Murad Khan and Shinji Toya

ID photo placeholders made with ZXX (an anti-computer-vision font developed by Sang Mun)